DAWN OF THE COMPLEXITY PARADIGM

INTRODUCTION

Through the various crises in recent decades, it has become clear that the world is fundamentally uncertain, and not merely stochastically uncertain. With new insights from complexity theory, among other fields, it is starting to dawn on both science and practice that we need a greater diversity of models and tools, from agent-based modeling and network theory to premortems and scenario thinking. These will enhance financial risk management practices.

In addition, decades of cognitive research have taught us that there are very effective methodologies for extracting much more information from a group of professionals and mitigating the impact of human biases. The decision-making process itself is an important toolkit for improving risk-return decisions, both within the financial world and beyond.

However, financial education as well as practice within financial institutions and rules used by supervisors are far from fully adopting these new insights. This article is a small tour through the past, the current state of education and practice and the expectations for the future of the finance profession. The present article argues that these insights, including those arising from behavioral research and complexity theory, lead to requirements for a broader and more diverse arsenal of competences for a financial professional ‘fit for the future’.

DECADES OF OVERESTIMATING OUR ABILITIES

Since the 1990s, there has been a strong trend within risk and portfolio management towards steering financial institutions and markets based on well-established statistical methods, such as Value at Risk and associated stochastic modeling of the balance sheet risks of banks, insurers and pension funds. The reason for this is the underlying ideology of neoclassical equilibrium modeling based on the axioms of rational agents in a world that always tends towards equilibrium. The underlying worldview for managing risks seemed to be aimed at “being in control”: a mechanical worldview in line with our Cartesian-Newtonian school education from the ‘hard’ sciences like physics. The key assumption underlying this approach is that our economic and financial world is based on stable mechanical (stochastic) processes that we can measure and control. This is actually the case in many sectors. Aircraft can fly more safely, driving has become safer and nuclear reactors can supply electricity more safely. These are top-down processes with stable cause-effect functions. We often categorize these types of processes as “complicated processes”. It takes a lot of specialist knowledge to understand them, but we can measure and control the risks. For this category of processes, risk management entails measuring fatalities and “bringing them under control”.

However, a large part of all the processes that we deal with within economics, especially finance, are of a fundamentally uncertain nature. This applies to most market risks and business risks, but also to money laundering and various operational and cyber risks. We call these “complex processes”. They are essentially different in nature from “complicated processes”. The cause lies in the fact that there are no clear causal top-down relationships in complex systems; instead, there are feedback loops and changing levels of connectivity between institutions, countries and so forth. Feedback loops arise in part because of what George Soros calls “reflexivity”: the relationship between individuals and the market as a whole in which “behavior” plays a major role. A person who at a given moment considers buying a certain asset such as a house, with an x% probability, can (unconsciously) adjust preferences due to an increase in market prices, whereby the probability of buying that asset changes to y% (y>x), thus influencing the market outcomes. As a result, the preferences of many other people change and so-called feedback loops arise because of these reflexive relations between the market and people. Small initial changes in, for example, buying behavior can bring about enormous changes at the macro level through various self-reinforcing (positive) feedback loops.
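The reflexive loop described above can be illustrated with a deliberately simple sketch. Everything in it is an assumption made for illustration: the function name, the linear link between the last price change and the buying probability, and the parameter values are hypothetical and not taken from Soros or from any calibrated model. It only shows that the same small initial price nudge dies out when the feedback is weak and grows until it saturates when the feedback is strong.

```python
def simulate_reflexive_market(feedback, steps=50, base_prob=0.10, shock=0.01):
    """Mean-field sketch of a reflexive market (hypothetical, for illustration).

    base_prob is the x% buying probability without any price movement;
    feedback controls how strongly a price rise lifts it towards y% (y > x).
    """
    price_change = shock  # small initial nudge in prices
    path = []
    for _ in range(steps):
        # Buying probability responds to the most recent price change.
        buy_prob = min(1.0, max(0.0, base_prob + feedback * price_change))
        # Assumed price rule: the price change equals demand in excess of the baseline.
        price_change = buy_prob - base_prob
        path.append(price_change)
    return path


damped = simulate_reflexive_market(feedback=0.5)     # weak feedback
amplified = simulate_reflexive_market(feedback=1.5)  # strong feedback
print(f"weak feedback, final price change:   {damped[-1]:.6f}")
print(f"strong feedback, final price change: {amplified[-1]:.6f}")
```

In this toy setting the outcome depends entirely on whether the feedback strength is below or above one, a threshold that in real markets is neither observable nor constant.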

In addition, market dynamics change because of changes in connectivity. For example, this can occur through new trade agreements, inter-bank loans, a reduction of trading activities by banks and mandatory Central Clearing Platforms. Dynamics also change through innovations. Examples include less capital-intensive industries and growth in products such as Exchange Traded Funds. This set of feedback loops, innovations and connectivity changes leads to a continuous change in the dynamics of financial markets, resulting in fundamental uncertainty. This is a world where we can neither measure equilibria (because there are often none at all) nor estimate probability distributions. We do estimate such distributions, but in a complex world they have little practical value and can even be very misleading. Bernard Shaw’s quote “Beware of false knowledge, it is more dangerous than ignorance” sums this up well.

COUNTERPRODUCTIVE WORLDVIEWS

A well-known example of the counterproductiveness of modeling is the failure of financial models to measure bank stability prior to the 2007/2008 Global Financial Crisis (GFC). Due to the very low market volatility – and very low correlations – these models didn’t signal that the risks had become very high. According to conventional academic reasoning and many financial professionals in the field, low market volatility had to imply low risk. Otherwise, the vast majority of the market would be irrational, which was inconceivable in the presumed world of (predominantly) rational agents. This happened despite the fact that people like Minsky (1986) had warned about the existence of collective irrationality. The focus was also too much on the micro level: What is the risk per institution? However, risk can only be measured when it is connected to the environment: What are the relations between institutions and how do risks pass through a system? Indeed, reductionism oversimplified the world and a holistic system view was lacking.

A second example of the destructive effect of ideological models without any scientific foundations is the mean reversion interest rate model. This model assumes that interest rates have a strong tendency to revert to a kind of equilibrium level (the “mean”), which for decades attributed a nil chance to interest rates below 2%. This resulted in inertia from insurers and pension funds, many of whom misjudged their risks because of this ideology. The reasoning was that low interest rates fuel demand and discourage saving, which drives interest rates up again through several clear causal relationships. This was the ideology of mainstream economists. With a complex world view, on the other hand, we know that the probability of very low interest rates cannot be quantified, but it is easy to imagine how behavior-driven feedback loops, changes in the environment and innovations could lead to very low interest rates. A little imagination teaches us that long-term low interest rates can make people realize that they must save more money if their future income is to remain the same. The income effect, as it is called, slowly starts to dominate the substitution effect. Although low interest rates initially breed more demand in the economy and result in less saving, this can reverse after a while. Central banks, working from the naive assumption that low interest rates should always lead to more spending, then shout even louder that interest rates should remain low; as a result, people start saving even more instead of less. The positive feedback loop is in full swing and interest rates are in a trap. This is not necessarily the (only) cause. Innovations that make companies less capital intensive, et cetera, could also be imagined. Many insurers and pension funds that adhered to the ideology of mean reversion were badly hit over the last three decades because they had not taken protective measures against a disastrous fall in interest rates. This meant that inflation adjustments (indexation) were no longer possible and many pension funds had to close altogether.
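How a mean reversion assumption mechanically produces a “nil chance” of very low rates can be shown with a small sketch. The article does not name a specific model; the code below assumes a Vasicek-style (Ornstein-Uhlenbeck) short-rate process, whose long-run distribution is normal with mean equal to the reversion level and variance vol² / (2 · speed). The function name and all parameter values are hypothetical and chosen only for illustration.

```python
import math


def stationary_prob_below(level, mean, speed, vol):
    """Long-run probability that the rate is below `level` under the ASSUMED
    mean-reverting model (Vasicek-style; not a model named in the article)."""
    # Stationary standard deviation of an Ornstein-Uhlenbeck process.
    stationary_sd = vol / math.sqrt(2.0 * speed)
    z = (level - mean) / stationary_sd
    # Standard normal cumulative distribution function via the error function.
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


# Hypothetical parameters: 5% long-run mean, strong reversion, 1% volatility.
prob = stationary_prob_below(level=0.02, mean=0.05, speed=0.5, vol=0.01)
print(f"Model-implied probability of rates below 2%: {prob:.4%}")
```

The tiny probability is a consequence of the assumed equilibrium level and reversion speed, not a measurement of the world. If the “mean” itself can drift because of feedback loops or innovation, the number carries no information.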

RELEVANCE OF HUMAN BEHAVIOR RECOGNIZED

Complex processes do not have a simple cause-effect relationship, while feedback loops very often cause systems to spiral out of balance. Endogenous (behavior-driven) processes lead to unstable situations, chaotic crises and, in between, temporary states of stability. Therefore, we can speak of navigating “between order and chaos”. Endogenous processes within people’s social networks are driven, among other things, by a combination of emotions and cognitive biases. Describing these combinations exceeds the scope of the current article, but the components, including overconfidence and confirmation bias, have been extensively studied since the 1970s by, among others, Kahneman (2000). A major role is also played by the so-called “affect heuristic”, in which feeling good about long-term positive markets reduces our perception of risk (Slovic, 2000), and by fear-related emotions such as loss aversion, which includes the fear of missing out. Moreover, the human ability to collectively generate stories that gain traction through epidemic diffusion processes is an important part of endogenous imbalance. Scientific developments in this area have been described in particular by Shiller (2019).

These biases and heuristics have been acknowledged by almost all economists, but many of them are not yet ready to recognize that this can lead to unstable feedback loops. Or they do recognize this, but try to get a little closer to reality with small adjustments in their equilibrium models. This has been called the “shoehorn” approach: Try to force some refuted theory into something that looks more plausible, even if it does not fit. However, as long as changing preferences and resulting feedback mechanisms are not included, economic models continue to miss the essence of real-life economic system behavior.

COMPLEXITY THEORY MEETS BEHAVIORAL SCIENCE: DAWN OF A NEW PARADIGM

As Kuhn (1962) argued, paradigms that seem to be failing will only really succumb if there is a new paradigm to replace them. In economic science, especially in investment theory, the emergence of behavioral finance is not sufficient as a new workable paradigm. It is not a replacement framework for how the economy, including financial markets, works. It erodes the paramount assumption of Homo Economicus in conventional finance, but it has not proposed a new form of modeling the micro or macro economy. However, the coincidentally parallel development of Complexity Theory over the past 30 years does provide the building blocks for a new paradigm: one of emergent processes, connectivity and interaction (feedback loops et cetera), which are more important than studying the static particles of the system itself. This theory is also increasingly applied to economics, as Arthur (2013) summarized in an overview.

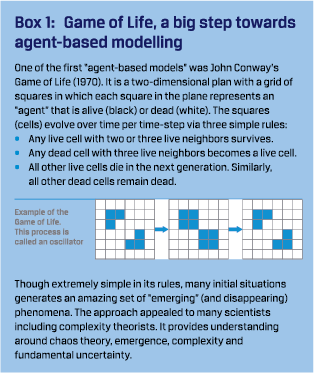

AGENT-BASED MODELS

Armed with the knowledge of behavioral finance, from the first decade of the twenty-first century, the first steps were taken to incorporate behavioral aspects into the modeling of the (financial) economy. For example, it became possible to incorporate into models how the behavior of groups of “agents” (institutions, households, etc.) adapts to certain developments in the economy and/or financial markets. This is a kind of “learning process” which does not necessarily have to be rational. For example, a sustained period of rising markets will make more people “learn” that markets will most likely continue to rise: a trend-following heuristic. As another example, an experience like persistent low interest rates might mean that the income effect gradually comes to dominate the substitution effect. In any case, learning is never “perfect”. Our process of “learning” is based on simple heuristics that differ from person to person. As the economy changes, we adjust our heuristics. Experiments show that large groups follow these heuristics-based learning rules. This makes it possible to build models of heterogeneous groups of dynamic, adapting agents, and here, simulation provides a great deal of insight into the origin of market behavior. Unfortunately, complexity models do not yield a beautiful, closed-form solution as their output. Examples of these simulation models are the dynamic macro-economic models by De Grauwe (2012) and Hommes (2019), among others, which provide us with a better understanding of endogenous instability and the resulting fat tails in macro-economics.

Farmer (2009), one of the fathers of complexity economics, has created many agent-based models. One of these is a model in which hedge funds, banks, regulators and investors interact with each other. This model explains, among other things, how regulation can lead to unintended positive feedback loops and instability.
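The idea of heterogeneous agents who “learn” through a simple trend-following heuristic can be sketched in a few lines. This is a toy illustration only, not the model of De Grauwe, Hommes or Farmer: the function name, the buy/sell rule, the memory windows and the price-impact parameter are all assumptions introduced here. Each agent looks back over its own memory window, buys if returns over that window were positive and sells otherwise; the resulting net demand moves the next return.

```python
import random

MAX_MEMORY = 50  # longest look-back window of any agent (hypothetical)


def simulate_trend_followers(n_agents=200, steps=300, impact=0.01,
                             noise=0.01, seed=42):
    """Toy agent-based market with heterogeneous trend followers (illustrative)."""
    rng = random.Random(seed)
    # Heuristics differ from agent to agent: each has its own memory length.
    memories = [rng.randint(1, MAX_MEMORY) for _ in range(n_agents)]
    # Warm-up history of purely random returns.
    returns = [rng.gauss(0.0, noise) for _ in range(MAX_MEMORY)]
    sentiment_path = []
    for _ in range(steps):
        net_demand = 0
        for memory in memories:
            # An agent "learns" that markets rise if its recent window was positive.
            recent = sum(returns[-memory:])
            net_demand += 1 if recent > 0 else -1
        sentiment = net_demand / n_agents  # between -1 (all sell) and +1 (all buy)
        # Assumed price rule: return = impact of net demand plus random news.
        returns.append(impact * sentiment + rng.gauss(0.0, noise))
        sentiment_path.append(sentiment)
    return returns[MAX_MEMORY:], sentiment_path


returns, sentiment = simulate_trend_followers()
print(f"most bullish sentiment: {max(sentiment):+.2f}")
print(f"most bearish sentiment: {min(sentiment):+.2f}")
```

Even in this minimal setting there is no closed-form solution to read off; the behavior of the market has to be explored by running the simulation under different seeds and parameters.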

BROADER APPLICATION OF NETWORK THEORY WITHIN FINANCE

A variant that is somewhat related to agent-based modelling is the spectrum of network models. In these, agents are the nodes in the network. The relationships (loan volume between banks, derivative contracts between institutions, etc.) are represented by the connections (edges) between the nodes. Shocks in the market can be propagated through such a network, providing insights into which players are the “central culprits” that can cause the system to collapse. A good example is the “DebtRank” network approach of Battiston (2012), which shows that, for example, two banks of the same size can have a totally different systemic impact on the system as a whole. This replaces the “micro” thinking of “too big to fail” with the “system” thinking of “too central to fail”. Another illustrative approach is Borovkova’s (2013) Central Clearing Platform (CCP) network model. This research reveals that a CCP is not more secure than a bilateral clearing approach for all institutions in the network; this depends on where in the network an institution is located. This contrasts with the “micro” approach in which the dynamic effects within the system are ignored and the sum of micro risks naively determines the macro risk.
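The “too central to fail” point can be made concrete with a small default-cascade sketch. Note that this is a plain threshold cascade, not the DebtRank algorithm of Battiston (2012) and not Borovkova’s CCP model. The bank names, capital buffers and exposures are invented for illustration. Two banks, “Hub” and “Edge”, have borrowed the same total amount, yet their failures have very different consequences because of where they sit in the network.

```python
def default_cascade(exposures, capital, initial_default, loss_given_default=1.0):
    """Return the set of banks that default after `initial_default` fails.

    exposures[lender][borrower] is the amount lent; a lender defaults once its
    accumulated losses reach its capital. Simple threshold cascade (illustrative).
    """
    defaulted = {initial_default}
    losses = {bank: 0.0 for bank in capital}
    frontier = [initial_default]
    while frontier:
        next_frontier = []
        for failed in frontier:
            for lender, loans in exposures.items():
                if lender in defaulted:
                    continue
                amount = loans.get(failed, 0.0)
                if amount == 0.0:
                    continue
                losses[lender] += loss_given_default * amount
                if losses[lender] >= capital[lender]:
                    defaulted.add(lender)
                    next_frontier.append(lender)
        frontier = next_frontier
    return defaulted


# Hypothetical network: Hub and Edge each borrowed 30 in total.
capital = {"Hub": 5, "Edge": 5, "C": 8, "D": 8, "E": 8, "F": 40, "G": 6}
exposures = {
    "C": {"Hub": 10},
    "D": {"Hub": 10},
    "E": {"Hub": 10},
    "F": {"Edge": 30},
    "G": {"C": 7},
}

for bank in ("Hub", "Edge"):
    failed = default_cascade(exposures, capital, bank)
    print(f"{bank} fails -> {len(failed)} bank(s) in default: {sorted(failed)}")
```

Judged by balance sheet size alone, the two banks look identical; only the network view reveals that one of them can bring down several thinly capitalized counterparties and, through them, a bank it never dealt with directly.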

CALL FOR MORE PLURALISTIC THINKING

It is not yet possible to say how quickly this more pluralist approach, applying a wider variety of models instead of relying on one ideology, will become commonplace in education and in practice. On the internet, we do see an increase in the use of terms related to these new modelling approaches. However, not much can be concluded from this, because much of the terminology is also fairly new. An increasing number of international research and training institutes related to complexity and complexity economics have emerged. Institutes such as Rethinking Economics, which pushes for greater diversity in economic education worldwide, have examined the curricula of various universities. They found that in the Netherlands, for example, 86% of all education is still based on conventional “neoclassical” models. Unfortunately, they have only just started measuring in Europe, so they cannot yet show whether progress is being made across the continent. What is clear so far is that students worldwide are now also demanding much more pluralist, real-world educational programs.

Asset managers, banks and regulators are also paying increasing attention to network models and other forms of risk modeling and are collaborating with the new complexity research institutes. However, most of the financial models that are used are still of the classical “equilibrium” type. Change is happening very slowly, not least because these classical models are the prescribed supervisory models and they require an enormous time commitment within financial organizations.

PROGRESS: UNDERSTANDING AND CREATING RESILIENCE INSTEAD OF PREDICTING AND CONTROL

Scientific fields such as data science and artificial intelligence produce an enormous amount of new knowledge. And computers are still getting faster. All these developments give people hope that we will be able to model risks better.

These developments will certainly bring progress in several areas, such as better understanding of idiosyncratic aspects of consumer credit risk, security risks in various physical projects and cyber-attacks in which global data can recognize repetitive patterns.

However, when dealing with a complex environment such as the financial world, the negative side of new knowledge, faster computers and better data can largely be summarized in one word: overconfidence. Once we were able to solve long-term Dynamic Stochastic General Equilibrium (DSGE) models with modern computers, this led to the remark by Nobel Prize winner Robert Lucas in 2003 in his speech to the American Economic Association (Lucas, 2003): “The problem of depression prevention has been solved”. This belief in “superior knowledge” came just a few years before the worst economic depression since 1929. The combination of modeling and computing power made the world so overconfident that it created the most unstable economy since the 1920s.

SELF-ORGANIZED CRITICALITY AND THE THEORY OF UNPREDICTABILITY

However, even if complex processes were much better understood through the new models discussed above, the predictability of a potentially high-risk event would still remain very low. This can be explained by the research on so-called “self-organized criticality”. Many processes in the natural sciences, ecology and social sciences have the property that behavior, especially around so-called tipping points (highly built-up tension), is very erratic, non-linear and unpredictable. This is despite the fact that the building blocks themselves are (often) very predictable in their behavior.

We can understand quite well how tension is built, for example instability in financial markets or the tension in tectonic plates preceding an earthquake. However, we do not know when this increased tension will lead to a meltdown. This process of inherent unpredictability, which is closely linked to Mandelbrot’s Fractal Theory, is often explained in terms of sandpiles. If you build a pile of sand on a beach using grains of sand in your hand, that pile can collapse at a height of 20 centimeters, 40 centimeters or even as high as a meter or more. The difference in the number of grains of sand that come down in an “avalanche” is enormous. The timing is virtually unpredictable. Of course, there is a smaller chance that a sandpile will reach a very great height before it collapses; however, this decrease in probability follows a kind of power law distribution. The probability of extreme outcomes is still significant under a power law and does not converge quickly to 0 as it would with normal distributions. It is these extreme outcomes with outsized, often negative, impact that should not be neglected by finance professionals and supervisors or classified as “too unlikely to occur”.
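The sandpile image can be explored with a small simulation. The sketch below assumes the standard Bak-Tang-Wiesenfeld sandpile rules on a square grid, which the article does not spell out: a cell holding four or more grains topples and passes one grain to each neighbor, and grains that cross the edge are lost. Grid size, number of grains and the seed are arbitrary choices. Each grain follows a fully predictable rule, yet the size of the avalanche triggered by the next grain varies enormously.

```python
import random


def run_sandpile(size=10, grains=5000, seed=0):
    """Drop grains one by one on a grid and record each avalanche size.

    Assumes the Bak-Tang-Wiesenfeld toppling rule (illustrative sketch).
    """
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    avalanche_sizes = []
    for _ in range(grains):
        row, col = rng.randrange(size), rng.randrange(size)
        grid[row][col] += 1
        topplings = 0
        unstable = [(row, col)]
        while unstable:
            r, c = unstable.pop()
            if grid[r][c] < 4:
                continue  # already relaxed by an earlier toppling
            grid[r][c] -= 4
            topplings += 1
            if grid[r][c] >= 4:
                unstable.append((r, c))
            for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                # Grains pushed over the edge of the grid simply disappear.
                if 0 <= nr < size and 0 <= nc < size:
                    grid[nr][nc] += 1
                    if grid[nr][nc] >= 4:
                        unstable.append((nr, nc))
        avalanche_sizes.append(topplings)
    return grid, avalanche_sizes


grid, sizes = run_sandpile()
ordered = sorted(sizes)
print(f"median avalanche size:  {ordered[len(ordered) // 2]}")
print(f"largest avalanche size: {ordered[-1]}")
```

Comparing the typical avalanche with the largest one gives a feel for the heavy tail: most grains cause little or nothing, while a few trigger avalanches that sweep across much of the grid, and nothing in the local rule announces which grain it will be.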

However, if the self-organized criticality of a sandpile is unpredictable, even though the behavior of each grain itself is highly predictable, how large is the unpredictability of human-induced “sandpile phenomena” such as financial markets? Every person is subject to behavioral changes when a system changes. This often creates destabilizing (positive) feedback loops and subsequently greatly reduces predictability. Consequently, forecasting is impossible for most interactive economic situations. This is the notion of fundamental uncertainty. As Keynes (1937) noted: “There is no scientific basis to form any calculable probability whatever. We simply don’t know”. Despite a very poor track record, many economists continue to see forecasting as a socially meaningful activity.

So, we have to get used to using a diversity of models combined with broad perspectives to find a robust and adaptive solution that provides a reasonable outcome under different world views. Robust solutions ensure that one can survive shocks. For example, pension funds and insurers cannot gamble on the surmise that “mean reversion exists”. They also need to survive if mean reversion doesn’t exist, and should therefore hedge some of their downside interest rate risk. They can also make risk hedging dependent on developments in interest rates, inflation and other variables. This will allow them to develop conditional plans that are adaptive if the environment changes structurally. By having knowledge about a diversity of models and imaginary world views, including actions to be taken, it is possible to respond more quickly to a changing environment, changing connectivity, changing technology and emerging feedback loops. This is more effective than optimizing under just one worldview and seeing one’s financial institution fail if that worldview is false. Allowing imaginable calamities to happen while being unprepared and then attributing them to “bad luck” is not a responsible policy.

RESILIENCE ENGINEERING: ORGANIZATIONAL DESIGN AND LEARNING CAPACITY

Thinking about complexity does not only lead to a different use of (a diversity of) models. Being aware of fundamental uncertainty and the relevance of the non-linear dynamics of a complex system also leads to a different view of, among other things, the design of organizations and learning within organizations. This is often summarized under the term “resilience engineering”. Efficiency, optimization and centralization need to be less glorified as ultimate goals and more balanced with those elements that are more effective in a fundamentally uncertain world, such as redundancy, flexibility and diversity.

If we know exactly how the world works, we can steer institutions with minimum inventory, minimum capital, minimum waiting times. However, in a complex world, a lack of redundancy often proves to destabilize the entire system. Bank capital shortages during a financial crisis and intensive care capacity shortages during a pandemic are examples of poor redundancy management in a complex environment.

Unfortunately, redundancy is not the only solution in a complex world. It is hard to say how much redundancy is enough in a fundamentally uncertain world. This implies that one must use other design tools as well. Instead of having an infinite amount of equity capital, a bank can also deal flexibly with its loan capital (convertibles) and make solid agreements about how other debt securities are written off in the event of a (near) default. In a complex system, the default of a financial institution is in most cases preferable to the (still common) practice of keeping it alive with lots of government support. Bankruptcies are part of a healthy economy. Setting up a tense system to prevent bankruptcies at all costs will make all institutions increasingly homogeneous in their structure and behavior because of very strict, unambiguous regulations. Identical balance sheet construction and “exit” strategies in case of a crisis ensure that the connectedness of the system becomes extremely high. Reducing risks at the micro level leads to increasing system risks at the macro level. This applies to regulations, to CCPs, to monetary policy worldwide and so on. Centralization and lack of diversity create dangerous unstable systems because of a “control” tendency. Therefore, diversity in the strategies of institutions is a great asset if systemic risk is to be kept low. More principle-based and less rule-based regulation fits in with this, among other things. The publications by De Haan (2019) and Broeders (2018) have shown that regulators are increasingly aware of this. However, so far it is often only a few people within these institutions who really have this micro-macro paradox on their minds.

Learning is also different in a “complex” world than in a “complicated” world. A complicated worldview assumes stable processes and when mistakes are made, it is often investigated who made the mistake. Dismissal or better training are logical actions from the perspective of this worldview. In a complex world, people think more in terms of understanding changing systems, where the system must be (re-)organized in such a way that human errors have limited consequences. The studies by Hollnagel (2006) and Dekker (2017) have shown that the root cause of most disasters does not lie in individual human errors; these are just symptoms. Instead, it lies in a complex system that, through a drive for “optimization” and at the same time a quest for “zero-risk” (of the known, small risks), makes itself more fragile and more sensitive to calamities. “Drift into failure” is how Dekker (2017) described the endogenous processes that make complex systems such as companies less safe. In a complex worldview, people play a major role, from the bottom up, in helping to realize a better design. A lack of learning in a financial organization about the system as a whole, combined with too much focus on managing from the top down and preventing small local risks in the hope that large risks will not materialize, often has the opposite effect.

Thus, complexity thinking is not only useful for understanding complex financial markets, but also for understanding complex adaptive financial institutions and companies that operate in an equally complex external environment.

By analyzing complex processes in financial institutions, risk management and compliance can better understand how certain rules may reduce risks at the micro (silo) level, but lead to increased risks at a macro (company) level. This can happen, for example, via the impact on other departments (workload, lead time, pressure on customer service), leading to fraud or other forms of “rule insubordination”, with all the associated feedback loops. There are several known cases, without going into the names, of signature forgeries as a result of compliance-related long lead times and customer burden that employees found embarrassing and unacceptable. Unfortunately, the solution was often to fire these people instead of changing the system. Viewing a company as separate departments and not as a system is just as dangerous as an equilibrium model in the economy that ignores endogenous change.

Again, supervisors should also embrace a system approach. Currently they contribute strongly to a silo approach, imposing rules per risk factor without a system view and, with their many rules, producing a homogeneous landscape of financial institutions and a fragile ecosystem.

A GOOD DECISION-MAKING PROCESS DOMINATES A GOOD ANALYSIS

The influence of the growing insights in the cognitive field of behavioral finance and behavioral economics on the finance professional goes beyond including human behavior in agent-based and other models. Human behavior is not only observed to better understand how the world works. The knowledge about pitfalls and noise in our decision-making process, both at the individual level and at the group level, can also be directly used to improve the way we make decisions in organizations operating under fundamental uncertainty.

We have realized that our risk perception and the entire decision-making process suffer too much from a large set of biases. Extensive research by, among others, Tetlock (2015) and Lovallo (2010) has revealed that groups of amateurs who follow a thorough process make better estimates of the expected outcomes and better decisions than individual top specialists (the so-called ‘experts’). Lovallo (2010) even concluded that the quality of the process has a six times greater effect on the quality of a decision than the quality of the analysis. Broader thinking by individuals and balanced group processes can, when combined, lead to significantly better decision-making processes.

The most effective approach is to use tools that prevent individual and group biases. Thus, the process of working towards a decision is structurally an important instrument in producing sound decisions in the context of the company’s objectives and risk appetite.

Tools that help individuals and groups think more broadly and avoid pitfalls such as overconfidence, confirmation bias, the affect heuristic and groupthink include:

- Scenario thinking in the broad sense of the word (Van der Heijden, 2004),

- The Delphi method, Triangulation (Dalio, 2019),

- Premortem (Klein, 2007) and

- Pre-commitment.

This is far from an exhaustive list. A growing body of literature, including Grant (2021), Johnson (2018), Heath (2013) and Kahneman (2021), has provided scientifically sound but very practical procedures and checklists to reduce various biases and unnecessary noise in our decisions.

The (financial) business community is also slowly but surely making more use of these techniques. This is apparent from, among other things, the increasing flow of publications in this area by banks and asset managers, as well as the increasing number of behavioral researchers and behavioral risk managers at financial institutions and regulatory and supervisory bodies.

HOW WILL THE FINANCE PROFESSIONAL OF THE FUTURE WORK?

Among other things, the above implies that the finance professional must be very critical of assumptions and well aware of how much incorrect assumptions can affect the results of a model. The standard models that make extensive use of statistics, such as Value at Risk and Asset and Liability Models, can provide insights into changes in a risk profile over time. However, these models provide little insight into the absolute risks. We can only imagine extreme risks in a dynamic system and not express them in probabilities. This makes embracing different models relevant in understanding under which circumstances certain regulations, centralization of activities and so forth can be counterproductive. Different models will also better prepare financial institutions for the next major crisis. Various scenario tools, including stress testing, long-term worldviews, premortems and gaming tools, are part of the diverse group of models that should be applied. When applied in a structured way, these tools make companies more adaptive to change. We need to focus on the consequences of scenarios and the related actions: both actions now and pre-commitments, conditional on certain deeply conceptualized scenarios. Imagination is key. It is no surprise that, as the saying goes, the root cause of any crisis is a failure of imagination.

An important competence of the risk managers, portfolio managers and supervisors of the future is the ability to deal with ambiguity that is simply part of fundamental uncertainty. This will enable a multidisciplinary thinker to function well in a world that embraces complexity. Certainly at a senior level, it is a crucial quality to be able to lead a group process well and – instead of dominating it – to get the right information to the surface via various de-biasing processes. A company like Bridgewater has been selecting its people for this characteristic for decades.

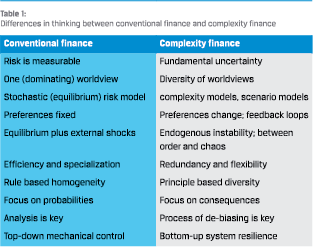

In summary, the more conventional finance approach and the emerging complexity approach can be contrasted as shown in Table 1. However, it should be noted that the table is an exaggeration, and both approaches regularly (partly) embrace aspects of the other paradigm.

A FUNDAMENTALLY UNCERTAIN PARADIGM SHIFT

Of course, the future perspectives discussed above are not the only changes that are imminent in the field of finance. For example, much attention will be paid to the better integration of climate change, geopolitical developments and demographic changes in risk management. In addition, improved data analytics and artificial intelligence will play a greater role in more “complicated” risk areas such as (parts of) credit risk and insurance.

However, the claim of this article is that the complexity theory approach and related areas will become more dominant. Whether and how quickly education, financial institutions and regulators will make the transition to a different way of working, one based on more pluralist models, less rule-based regulation and better decision-making processes, is itself an unpredictable social phenomenon: a paradigm shift. Any assertion here would contradict the previous analysis about the unpredictability of critical tipping points in complex systems.

Twenty years ago, physicist Stephen Hawking suggested that the twenty-first century could well be the century of complexity theory. It would be great if this new paradigm could soon find its way into the practice of the risk and finance professional. This will not magically lead our institutions to be “in control”, but it will make them more resilient and adaptive. And that is exactly what is needed in a complex world.

Literature

- Arthur, W., 2013, Complexity Economics: A different framework for economic thought. https://www.santafe.edu/research/results/working-papers/complexity-economics-a-different-framework-for-eco

- Battiston, S., M. Puliga, R. Kaushik, 2012, DebtRank: Too Central to Fail? Financial Networks, the FED and Systemic Risk. Nature Scientific Reports, 541 (2012). https://doi.org/10.1038/srep00541

- Borovkova, S. and L. El Mouttalibi, 2013, Systemic Risk and Centralized Clearing of OTC Derivatives: A Network Approach. SSRN: https://ssrn.com/abstract=2334251

- Broeders, D., H. Loman and J. van Toor, 2018, A methodology for actively managing tail risks and uncertainties. Journal of Risk Management in Financial Institutions Vol. 12 pp 44-46.

- Dalio, R., 2017, Principles. Life and work, New York

- De Grauwe, P., 2012, Lectures on Behavioral Macroeconomics, Princeton

- De Haan, J., Z. Jin and C. Zhou, 2019, Microprudential regulation and banks’ systemic risk, DNB Working Paper no 656, October 2019.

- Dekker, S., 2017, The Safety Anarchist, Farnham

- Farmer, D. and D. Foley, 2009, The economy needs agent-based modelling, Nature 460, pages 685-686

- Grant, A., 2021, Think Again. The Power of Knowing What You Don’t Know, New York

- Heath, C. and D. Heath, 2013, Decisive, New York

- Hollnagel, E., D. Woods and N. Leveson, 2006, Resilience Engineering, Farnham

- Hommes, C. and J. Vroegop, 2019, Contagion between asset markets: a two market heterogeneous agents model with destabilizing spillover effects, Journal of Economic Dynamics & Control 100, 314-333

- Johnson, S., 2018, Farsighted. How We Make the Decisions That Matter the Most, New York

- Kahneman, D. and A. Tversky, 2000, Choices, Values and Frames, Cambridge

- Kahneman, D., O. Sibony and C. Sunstein, 2021, Noise, New York

- Keynes, J., 1937, The General Theory of Employment, Quarterly Journal of Economics, Vol 51. No 2, February 1937, pp 214.

- Klein, G., 2007, Performing a Project Premortem, Harvard Business Review, September 2007. https://hbr.org/2007/09/performing-a-project-premortem

- Klerkx, R. and A. Pelsser, 2022, Narrative-based robust stochastic optimization. Working paper. Feb 2022 Accepted for publication by Journal of Economic Behavior and Organization.

- Kuhn, Th., 1962, The Structure of Scientific Revolutions, Chicago

- Lovallo, D. and O. Sibony, 2010, The Case For Behavioral Strategy, McKinsey Quarterly, March 2010

- Lucas, R., 2003, Macroeconomic Priorities, American Economic Review Vol 93, No 1, March 2003, pp 1-14

- Minsky, H., 1986, Stabilizing an unstable economy, New York

- Shiller, R., 2019, Narrative Economics. How Stories Go Viral and Drive Major Economic Events, Princeton

- Slovic, P., 2000, The Perception of Risk, Abingdon-on-Thames

- Tetlock, P. and D. Gardner, 2015, Superforecasting: The Art and Science of Prediction, London

- Tieleman, J. et al (Rethinking Economics Netherlands), “Thinking Like an Economist?” (available at https://www.rethinkingeconomics.nl/)

- Van der Heijden, K., 2004, Scenarios: The Art of Strategic Conversation, 2nd edition, New York

Notes

- A positive feedback loop is a feedback mechanism in which the factors reinforce each other, so there is no stabilization but instead destabilization with often negative consequences. A negative feedback loop is stabilizing. The terms “positive” and “negative” are often confusing here.

- Complexity Theory seems to have been “born” when the Santa Fe Institute (see www.santafe.edu) was founded in 1984. Especially from the 1990s onwards, a large group of top scientists from various disciplines (including several Nobel Prize winners, among them the economist Kenneth Arrow) started to work together with successful results. They conducted research into emergent properties of complex adaptive systems in biology, physics, economics and so forth.

- Google Books Ngram Viewer shows that the use of terms like Agent Based Modelling, Complexity Economics and Network Theory has grown by 300% to 1000% since roughly the beginning of the millennium. The use of terms such as Neo-Classical Theory and Equilibrium models has been structurally declining since the beginning of this century. The use of Value at Risk, introduced in the 1990s, has gradually declined since the 2007 crisis.

- Examples include the Institute for New Economic Thinking (INET) at the Oxford Martin School, London School of Economics (LSE) Complexity Group, University of Amsterdam Center for Non-Linear Dynamics in Economics and Finance (CENDEF) and the University of Groningen Center for Social Complexity Studies (GCCS). An example of a multiform economics curriculum is CORE (Curriculum Open-access Resources in Economics www.core-econ.org). The co-founder is Sam Bowles of the Santa Fe Institute.

- In a distribution that follows a power law, a relative change in one quantity implies a proportional relative change in the other quantity.

- In practice, a power law probability distribution often does not even have a finite standard deviation, which makes it difficult for financial professionals to work with. In addition, the parameters are difficult to estimate, because estimation requires many observations in the “tail”. This requires data going back decades. However, the system has changed so much over time that these data no longer represent the system.

- See for example IMF Working Paper WP/18/39, “How Well Do Economists Forecast Recessions?”, Z. An, J. Tovar Jalles and P. Loungani, March 2018. See further analysis on the predictive qualities of “experts” in Tetlock (2015).

- In this context, it is strange that economists advise not to put all your wealth in the stock of one company, but to entrust all your wealth to one world view.

- Optimization takes place in many stochastic models – so with one single world view – which makes the outcome fragile for that world view. There are, however, models for “robust optimization”. Klerkx (2022) showed that by including different world views (scenario sets) and by performing a mini-max optimization (with a kind of game-theoretical concept that the “opponent” is allowed to determine which scenario is used), the resulting solutions are more robust to different worldviews.
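The mini-max idea in the last note can be illustrated with a small Python sketch. It is not the method of Klerkx and Pelsser, only a brute-force grid search under stated assumptions: the three world views, the asset returns in `SCENARIO_RETURNS` and all function names are hypothetical and chosen purely for illustration.

```python
from itertools import product

# Hypothetical asset returns under three world views; illustrative numbers only.
SCENARIO_RETURNS = {
    "growth":      {"equities": 0.12, "bonds": 0.01, "cash": 0.00},
    "stagflation": {"equities": -0.10, "bonds": -0.08, "cash": 0.01},
    "deflation":   {"equities": -0.25, "bonds": 0.06, "cash": 0.00},
}
ASSETS = ["equities", "bonds", "cash"]


def candidate_weights(steps=10):
    """All long-only weight vectors on a grid that sum to 1."""
    for i, j in product(range(steps + 1), repeat=2):
        if i + j <= steps:
            yield (i / steps, j / steps, (steps - i - j) / steps)


def loss(weights, scenario):
    """Portfolio loss (negative return) under one world view."""
    returns = SCENARIO_RETURNS[scenario]
    return -sum(w * returns[a] for w, a in zip(weights, ASSETS))


def worst_case_loss(weights):
    """The 'opponent' picks the world view that hurts this portfolio most."""
    return max(loss(weights, s) for s in SCENARIO_RETURNS)


def minimax_portfolio(steps=10):
    """Minimize the maximum loss over all world views."""
    return min(candidate_weights(steps), key=worst_case_loss)


def single_view_portfolio(scenario, steps=10):
    """Optimize for one world view only, ignoring the others."""
    return min(candidate_weights(steps), key=lambda w: loss(w, scenario))


fragile = single_view_portfolio("growth")
robust = minimax_portfolio()
print("single view:", fragile, "worst-case loss:", worst_case_loss(fragile))
print("mini-max:   ", robust, "worst-case loss:", worst_case_loss(robust))
```

With these made-up numbers, the portfolio optimized for the "growth" world view alone is fully invested in equities and loses heavily if the opponent chooses "deflation", whereas the mini-max portfolio accepts a lower return in the favourable world view in exchange for a much smaller worst-case loss.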

In VBA Journaal, by Theo Kocken